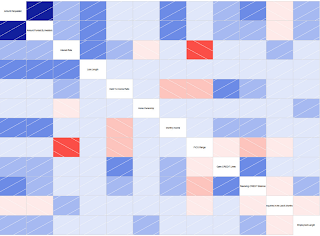

This is the time of the year when pundits make their 2015 predictions. But to make predictions about Data Science, shouldn't one use data? Here are four charts from Google Trends that show how search interest in various data science technologies is trending. Apache Spark really is overtaking Apache Hadoop.

In this R vs. IPython Notebook chart, we should read only the direction of the trends, not the absolute magnitudes. "R" is notoriously difficult to Google for, and "R Cran" is just one of the many tricks R users employ to find information about R. And, sadly, Google Trends has no way to additively combine search trends (e.g. "R Cran" OR "R Project"). Still, we can see that IPython Notebook is skyrocketing upward while R is sagging.
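To make "trend rather than magnitude" concrete, here is a minimal Python sketch. It assumes each search term's interest is available as a plain list of evenly spaced values, and it scores each list by its least-squares slope divided by its own mean, so a small-but-rising term and a large-but-sagging term can be compared on direction alone. The function name `relative_slope` and the sample numbers are hypothetical placeholders, not real Google Trends data.

```python
from statistics import mean


def relative_slope(series):
    """Least-squares slope per time step, as a fraction of the series' own mean.

    Dividing by the mean removes the absolute magnitude, so only the
    direction and relative steepness of the trend remain.
    """
    n = len(series)
    if n < 2:
        raise ValueError("need at least two points")
    avg = mean(series)
    if avg == 0:
        raise ValueError("series mean is zero; relative slope is undefined")
    x_mean = (n - 1) / 2  # mean of the time indices 0..n-1
    num = sum((i - x_mean) * (y - avg) for i, y in enumerate(series))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return (num / den) / avg


# Placeholder values for illustration only (NOT real Google Trends data).
small_but_rising = [2, 3, 5, 8, 12]
large_but_sagging = [90, 85, 80, 78, 70]

print(relative_slope(small_but_rising))   # positive: trending up
print(relative_slope(large_but_sagging))  # negative: trending down
```

Because the score is scale-invariant, multiplying a series by any positive constant leaves it unchanged, which is the property we want when a search term captures only an unknown fraction of the real interest.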

This is a little hard to read and requires some explaining. "Apache Storm" was formerly known as "Twitter Storm", the name it carried when Twitter first open-sourced it on GitHub in 2011. But "Twitter Storm" has another common usage, which is a "storm of tweets" such as about a celebrity. I'm guessing about half the searches for "Twitter Storm" are for this latter usage.

The takeaway is that Storm got a two-year head start on Spark Streaming and has been chugging away ever since. Part of the reason is that Spark Streaming, despite the surge in popularity of base Spark, had a lot of catching up to do relative to Storm in terms of graceful error handling and graceful shutdown/restart. A lot of that is addressed by the new high-availability (HA) Spark Streaming features introduced in Spark 1.2.0, released a week ago.

But the other interesting trend is that the academic term "complex event processing" is falling away in favor of the more industry-oriented terms "Storm" and "Spark Streaming".

People forget that "Machine Learning" was quite popular back in the dot-com era. And then it started to fade. That is, until Geoffrey Hinton's 2006 work on deep belief networks kicked off what is now called deep learning. That seems to have lifted the popularity of machine learning in general. Well, at least we can say there's a correlation.

The other interesting thing is the very recent (within the past month) uptick in interest in DeepMind. Of course, there was a barrage of interest in October when the over-hyped headlines blared "mimics human". But I think it was only this past month that people started getting past the hype and looking at the actual DeepMind paper. The paper is interesting because it shows how they added state to a neural network, which is how they achieved "short term memory".